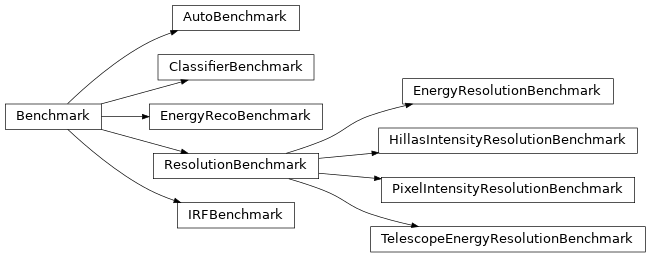

Benchmarks#

Benchmarks using DL1 information. |

|

Benchmarks using DL2 information. |

|

Benchmarks using DL3 information, e.g. IRFs. |

Base Benchmarks#

Pre-defined Benchmarks and Metrics.

- class ResolutionBenchmark(input_data_level: str, reco_column: str, true_axis, per_tel_type: bool, resolution_method: ~datapipe_testbench.benchmarks.resolution.ResolutionMethod = ResolutionMethod.RMS, reco_symbol: str | None = None, num_delta_bins: int = 100, delta_range: tuple[float, float] = (-10.0, 10.0), chunk_size=50000, max_chunks=None, filter_function=<function ResolutionBenchmark.<lambda>>, resolution_requirement_table: str | None = None, bias_requirement_table: str | None = None, bias_plot_range: tuple[float, float] | None = None)[source]#

Bases:

BenchmarkBase for benchmarks that measure the resolution of a measurement.

Initialize Resolution Benchmark.

- Parameters:

- input_data_levelstrq

name of input data in the InputDataset. Must be one of the fields in that structure.

- reco_columnstr

name of column in the event data file to use as the reconstructed quantity

- true_axisaxis

Histogram axis for the true axis of the dispersion. The name of the axis must be the name of a column in the input events data

- per_tel_typebool

True if this should produce results per telescope type

- resolution_methodResolutionMethod

How to compute resolution

- reco_symbolstr, optional

Alternate label for the quantity, which can be in latex. If specified, this will be used in the plot labels.

- num_delta_binsint

how many bins to use in the relative difference (delta) axis

- delta_rangetuple[float, float]

bin range for the delta axis

- chunk_sizeint

how many events to load per chunk. Higher is faster, but uses more memory.

- max_chunksint

maximum number of chunks to process, or None to process all.

- filter_functionCallable

function of f(true_values, reco_values) -> bool to filter what gets filled.

- resolution_requirement_tablestr, optional

name of table specifying the requirement in the datapipe_testbench resources. If specified, it will be plotted

- bias_requirement_tablestr, optional

name of table specifying the requirement in the datapipe_testbench resources. If specified, it will be plotted

- bias_plot_rangetuple[float, float], optional

range to use when plotting bias (in units of the reco_column). If not specified it will be automatic.

- compare_to_reference(metric_store_list: list[MetricsStore], result_store: ResultStore) dict | None[source]#

Perform the comparison study on metrics associated with a set of experiments.

This function takes a list of MetricStores that have been previously filled by

datapipe_testbench.benchmark.Benchmark.generate_metrics(), generates plots and comparison results, and writes the output to a ResultsStore. If there are more than one MetricStore to compare, the first one is considered the _reference_.- Parameters:

- metric_store_list: list[MetricsStore]

The list of MetricStores to compare, the first of which is the _reference_ .

- result_store: ResultStore

WHere the results of the comparison study are stored.

- generate_metrics(metric_store: MetricsStore) dict | None[source]#

Produce metrics for this benchmark later comparison.

Called once per benchmark per

MetricsStore, i.e. for one set of input events, defines how to transform the event into to a metrics stored in aMetricsStorethat can later be compared.- Parameters:

- metric_store: MetricsStore

Where to store the metrics generated by this

Benchmark. It must be initialized with andatapipe_testbench.inputdataset.InputDatasetcontaining the inputs to use for the transformation into metrics.

- property name#

Return friendly name of this benchmark.